Added Friday, February 24th, 2012 @ 10:24 PM

Kinect This! is a video game I made that uses the Xbox 360 Kinect to control its four “mini” games. It was the next logical step after my earlier virtual/augmented reality efforts, which used various Wii controllers/accessories. I had looked into using a Kinect for those projects, but 1) it hadn’t been released yet for some of the earlier projects, 2) there wasn’t a whole lot of working support for it right after it was released, and 3) I wasn’t able to get my hands on one for a while after its release. I’m glad I finally did though! It was a ton of fun to work on, and everyone who played the games at the art show where I presented it really, really liked it :). Check out the videos above (and below) to see the games in action!

There are (if I remember correctly) two to four building textures in Smash City that some friends of mine were nice enough to make for the game. And while I did use a plugin to get the Kinect to work with Unity, everything else was created by me.

The source code for the games can be downloaded here (.zip).

Added Tuesday, February 21st, 2012 @ 10:37 PM

YouAre was my senior project and is part of the WiiMersioN Project. In fact, it was a culmination of everything I had learned working with different Wii controllers in my other projects and other parts of the WiiMersioN Project.

Rather than type a lot here about the project and have you read everything twice, I’ll simply link to my lengthy original documentation for the project.

Added Tuesday, February 21st, 2012 @ 10:35 PM

YouMove was a semester-long project for my installation class and is part of the WiiMersioN Project.

YouMove is a Unity game that is controlled with the Nintendo Wii Balance Board. The player moves the character by leaning in different directions while standing on the Balance Board. The red dot onscreen shows the player where he or she is putting his or her weight (to help visually connect one’s own motion to the character’s). The red dot is also used as a cursor to select onscreen options. Leaning on the Balance Board also causes the character to change the angle it’s looking. Put simply, the Balance Board is used to control both movement and the camera (at the same time). Because of this, players must move in short “bursts” if they wish to be able to see where they’re going (otherwise they’d be looking at the ground while moving). I don’t remember what the reason for this was, but I do remember that it was very intentional.

Players navigate in this manner through the Fine Arts buildings of the university. Or rather, they can try. For you see, after the character touches a certain number of objects (walls, tables, doors, anything) the buildings start to collapse. Eventually, as bigger and bigger pieces of the buildings’ structures start to collapse, the player can fall through the floor, down to a canyon below, at which point the entire complex collapses and falls down with the player.
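The collapse trigger described above can be sketched as a simple touch counter. This is a minimal Python sketch rather than the original Unity script: the class name, the method names, and the threshold of 10 are all hypothetical, and it assumes every touch counts (the write-up does not say whether repeated touches of the same object count once or many times).

```python
class CollapseTracker:
    """Counts touches and reports when the buildings should start collapsing."""

    def __init__(self, threshold=10):
        # Hypothetical value: the original number of touches is not stated.
        self.threshold = threshold
        self.touches = 0

    @property
    def collapsing(self):
        # Collapse has begun once the touch count reaches the threshold.
        return self.touches >= self.threshold

    def register_touch(self):
        """Record one collision and return True once collapse has begun."""
        self.touches += 1
        return self.collapsing
```

In a game engine, something like `register_touch` would be called from the collision callback of the character, and the first `True` result would start the collapse.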

I set the Balance Board up on a desk in the digital media students’ workspace (specifically, my desk). I then projected the game through the window in front of the desk and down onto the roof of the wood shop below. With this setup, the player would be standing in a very high place, on the “edge” of the building, looking down, causing a sense of imbalance (from a safe place thanks to the window). So that the projection could be seen, I presented the project at night.

Here is my (lengthy) original documentation of the project:

The main thing that was in the back of my head throughout the entirety of the project was that I wanted to use the Wii Balance Board somehow. This is something that I’ve wanted to do for a while now, but haven’t yet had the chance to do it.

Since sophomore year (and even some before then), I have been messing around with different Wii peripherals and using them outside of the context of the Wii. For example, one of the first projects I did used the Wii remote (Wiimote) as a movement detection device. The Wiimote was placed inside a wooden box that was hung on the wall. Whenever someone bumped or hit the box, the Wiimote would detect the movement and trigger an animation on a nearby computer screen.

In the overall scheme of things, I focused on using and mastering the Wiimote first. I’m definitely not done experimenting with it, but the culmination of my work with the Wiimote came together in The WiiMersioN Project, my final project for my game engines class last year. In it, users would hold two Wiimotes, one in each hand, and control onscreen hands in a recursive manner to pick up and move virtual Wiimotes. The movement of one’s hands in real life was mimicked in the game.

So I had a pretty solid start to controlling virtual hands and arms; the next thing I worked on was the head. My final project for advanced experiments, AU, was a piece that used head tracking to move a virtual camera around a virtual environment. The piece used two Wiimotes attached to a wearable helmet that pointed upward toward a source of infrared light. With these devices, I was able to track the rotational movement of a person’s head in three dimensions. So wherever the person looked in real life, that movement was directly translated and imitated by the virtual camera; if the person looked left, the camera looked left; if the person looked down, the camera looked down; and so on.

Now I had a basis for virtual head movement in addition to upper-limb movement. The next logical step seemed to be character movement. As I said, I had been planning on doing this for a while, and now seemed to be a great time to try it out. The Wii Balance Board seemed a perfect fit for controlling movement in the same vein as the other body parts were controlled. As a person moves his or her arms and hands, virtual hands and arms move accordingly; as a person moves his or her head, a virtual head moves accordingly; now with the Balance Board, a person could move his or her legs and/or feet, and virtual legs/feet would move accordingly. Most of the previous projects I had done with the Wii peripherals had something to do with the connection between a physical body part and a virtual one through relational movement, so I figured that this one would follow suit.

While some of my earlier works with this medium were installations/sculptures, my more recent ones were games. Because I was dealing with how to move a virtual character, that meant that I would use a game engine (Unity) in order to control a virtual camera in real time. So okay, I had something that would be presented on a screen, but that’s not much of an installation. I did some thinking –how could I take this idea and make it into an installation? On top of that, I didn’t really know what would be happening other than moving a character around. Well okay, I had a character moving, so what? Sure moving with the Balance Board is kind of interesting, but it certainly wasn’t enough.

Working off of the idea of a connection between physical and virtual, I came up with a few ideas. What if, instead of seeing from a first person view (in the game), the camera was instead from the perspective of another person –a second person view, if you will. While it still played on the idea of physical and virtual, it put a kind of spin on it. Normally, it is relatively easy to move ourselves through our surrounding environments. Our main sense, sight, has been developed to a point where we can judge depth. We have become accustomed to this. However, even when we do something as simple as covering one eye, this sense of depth is thrown off, and many people find it hard to navigate around the surrounding area. If such a minute change throws off a person’s ability to function so harshly, what would it be like to totally remove a person from his or her own body and place him or her in another person’s head? What would it be like to see yourself through another person’s perspective? On top of actually being able to see your entire body (which we almost never experience, even when looking in a mirror), how different would it be to control yourself and navigate your surroundings from a perspective different from your own? Additionally, what if the person whose eyes you were looking out of was seeing out of your eyes? Each of you would be viewing the world from each other’s perspective. If either or both of you wanted to move around, it would seem that some sort of coordination would be necessary to be at all effective. If the other person isn’t looking at you, then how will you know where you are or where you’re moving (and vice versa)? I’d imagine that there would have to be quite a bit of verbal communication between both parties. In order for either of you to see where you’re going, you’d each have to look at each other, and from there, determine where you can move, and probably where the other person can move as well.
More than likely there are lots of interesting things that would happen in such a setup. Even without the Balance Board, just using a camera and a pair of LCD glasses, this could be a really interesting experience, and it’s certainly something I thought about, but I really wanted to use the Balance Board. This will probably be at least part of a future project, though.

Well using a second-person perspective would most likely require more than one person using the equipment at a time. That’s all good and dandy, but unfortunately, I couldn’t afford getting two of everything. So how would I go about doing this as a singular experience? Perhaps a third-person perspective? In video games, a third-person camera is one that hovers outside of the character so that the player can see, say, over the character’s shoulder or maybe even see the entirety of the character’s body. If you were to recreate something like this in real life, it would be like attaching some kind of camera holster to your back; one that held the camera a few feet above your head and looked down at you. Then instead of seeing through your eyes, you’d be seeing through the perspective of this camera (through something like LCD glasses for example). So in the game, there would be a camera above the character; that’s what the user would be looking through. They would have to move the character while looking down from a slightly aerial view. However, I don’t imagine this would be all that hard to do. Sure, it’s a different way of looking at things, but it’s very easy to see where the character is in relation to its surroundings. For the most part, everything that can be seen from a first-person view can also be seen in the third-person view; in fact, even more can be seen from the third-person view than the first-person, so it could very well be that controlling a body like this would be even easier than it is normally…

This idea was okay, but it did have a few flaws in it. Could I perhaps build upon this? I then thought about using fixed camera angles. Fixed camera angles are third-person cameras that do not move with the character, whereas a normal third-person camera moves with the character. Remember the camera holster strapped to our back? Because it is attached to us, it moves with us, therefore, we’re always in the same position in the frame, and we always see what’s ahead of us for a distance, and a little bit of what’s behind us. A fixed camera is more along the lines of a series of security cameras in a building. Each camera can only see a limited area. Imagine you walk into view of one such camera; also imagine that you are, again, not seeing from your eyes, but from the view of the camera. You are only able to see what the camera sees; you can only see in front of you what’s in the frame of the camera; beyond that frame, you have no idea what’s in front of you, whether it be a wall, nothing, a person, a ledge, what have you. In addition to this, one’s sense of movement will be different. With a first-person view as well as a third-person view, holding up (for example) will move the character forward, while holding right will move the character right –the directions do not change, forward will always go forward and right will always go right. However, with a fixed camera angle, things are different. Imagine you’re still looking through the camera’s perspective. You’re facing the right side of the frame. If you were looking through a first-person view, you would be looking forward, and up would move you forward, but in the fixed-camera angle, you’re looking to the right, and holding up could do one of two things. It could (more likely) actually move you up in the frame (so you would be moving left from a first-person view), moving farther away from the camera. 
In this way, the direction pressed would move you in that direction in accordance with the view of the camera –up would move you up in the frame (farther away from the camera), down would move you down in the frame (closer to the camera), and left and right would move you left and right in the frame. So from a first-person view (still moving in accordance with the camera’s view), up would move you left, down would move you right, left would move you backwards, and right would move you forwards. Pretty different, right?
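The mapping between camera-relative input and the character’s own sense of direction can be worked out with a little vector arithmetic. The following is a minimal Python sketch under stated assumptions: a top-down coordinate system where +x is the right side of the frame and +y is “up” in the frame (away from the camera). The names `SCREEN_DIRECTIONS` and `relative_to_character` are hypothetical and only illustrate the idea.

```python
# Top-down coordinates (an assumption for this sketch):
# +x = right side of the frame, +y = up in the frame (away from the camera).
SCREEN_DIRECTIONS = {
    "up": (0, 1),
    "down": (0, -1),
    "left": (-1, 0),
    "right": (1, 0),
}


def relative_to_character(screen_dir, facing):
    """Describe a camera-relative input from the character's point of view.

    screen_dir: one of "up", "down", "left", "right" (as seen in the frame).
    facing: unit vector (x, y) the character is facing, axis-aligned.
    """
    dx, dy = SCREEN_DIRECTIONS[screen_dir]
    fx, fy = facing
    # Component of the input along the facing direction.
    forward = dx * fx + dy * fy
    # The character's left is the facing vector rotated 90 degrees
    # counterclockwise: (-fy, fx).
    leftward = dx * (-fy) + dy * fx
    if forward == 1:
        return "forward"
    if forward == -1:
        return "backward"
    return "left" if leftward == 1 else "right"


# Character facing the right side of the frame, as in the example above.
facing_right = (1, 0)
for key in ("up", "down", "left", "right"):
    print(key, "->", relative_to_character(key, facing_right))
```

With the character facing away from the camera, `facing = (0, 1)`, every input maps to itself, which is the ordinary first-person or follow-camera case.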

This seemed like a pretty good solution (at least for starters), but now I ran into a problem. Because a person would be seeing out of a third-person camera, that meant that they’d see themselves. In other words, there would have to be a virtual modeled character for the user to move around, whereas in a first-person view, the character is never seen since you’re inside the character. What I didn’t want to do was just model a character that would be used by everyone because I still wanted to have that sense of self via the physical/virtual idea. How would one come to feel a sense of himself/herself in a virtual world when the character he or she was looking at didn’t look anything like him/her? Another idea I’d been wanting to try occurred to me. Could I generate a model of the user at runtime? In other words, could I very quickly make a 3D model of whoever was using the equipment so that the virtual character looked like him or her? Well, I could do a few things. I could implement an avatar system in which the user would design a virtual character out of a set of pre-modeled features. In this way, the user could probably create a 3D character that looked somewhat like him/her. I wanted to try to do better than that. What if I made a generic 3D model and took pictures of people before they started using the equipment? With the pictures, I could quickly apply the face to the model’s face, the skin tone to the model’s body, and the clothes to the model’s body. Essentially, I would be applying a 2D image as a texture for a 3D model. Maybe this could work, but it wouldn’t look all that great, and it would more than likely be harder to do (especially quickly) than I initially thought. Well, what if everything was in 2D rather than 3D? If I were to do this, capturing a person’s image and recreating it virtually would probably be much easier.

For this, I was planning on setting up a small capture station where users would go before playing the game. I would have a green screen set up that the participants would stand in front of. The participants would be asked to position their bodies in certain ways and then a picture would be taken of them. So for example, each person would be asked to position their bodies in four main orientations –facing the camera, facing the right wall, facing the left wall, and facing away from the camera –this would give me four different angles to work with. A character moving up in the frame (farther away from the camera) would use the image of the person facing away from the camera, a character moving down in the frame (closer to the camera) would use the image of the person facing the camera, and so on. On top of this, I could model a skeleton in Maya and rig it for certain animations, such as walking. At the time I took the picture, I could section off different parts of the body. So for example, I would take the picture, then in (perhaps) Photoshop, I would have a template image of a body; with this template I could scale and move the different parts of the body to match those of the person whose picture was just taken. I could then crop those sections and turn them into individual images that would each be applied to the corresponding parts of the rigged skeleton. The different parts of the body would then be in the right positions and with the combination of them and the skeleton, I could “animate” this 2D, static image as a “3D” moving figure. It sounds complicated, I know, but I, more or less, know how everything would work and how I would do it; I just don’t know how fast I could make the process…

In addition, if this process were to work, I could save the resulting model of each person to play the game. With this, I could populate the virtual area with AI characters that were actually real people –the people who had just played the game! I felt this would add another quirky characteristic to the game’s “realism.”

So that covers the characters, now for the environment. Again thinking about physical/virtual, I wanted to create both the characters and the environments as closely to the real thing as possible, so wherever I ended up presenting this, I wanted to have a virtual model of the area. At this point, since I was thinking 2D, I figured I would take pictures of said area and create a 3D environment from that. There are many games that have done this before; one particular series that comes to mind is Final Fantasy. What’s done is that a 2D image is presented onscreen; however, the image has depth to it (as do most pictures). So while what’s seen onscreen is actually just a flat plane, the characters are able to move “back and forth” depth-wise through the image. Remember that the image is simply a flat plane, so it has no depth. The illusion of moving back and forth depth-wise is just that –an illusion. What’s really happening is that the characters are actually moving up and down in a 2D space but are being scaled as they move. So if a character is moving up (“away” from the camera and “back” in space), the character is simply moving up in a 2D plane as well as being scaled smaller. Because things that are farther away appear smaller, this scaling creates the illusion of moving back in space when in fact, that is not the case.
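The scaling trick can be sketched in a few lines. This is a minimal Python sketch, assuming a linear relationship between the character’s height in the frame and its size; the function name, the frame limits, and the near/far scale values are all hypothetical, and a real pre-rendered background would need values tuned to its perspective.

```python
def sprite_scale(y, y_bottom=0.0, y_top=100.0, near_scale=1.0, far_scale=0.4):
    """Return the scale for a sprite at height y in a flat 2D frame.

    The sprite is drawn at near_scale at the bottom of the walkable area
    (closest to the camera) and shrinks linearly to far_scale at the top
    (farthest from the camera). All default values are illustrative.
    """
    # Normalise y to 0..1 and clamp so the sprite never grows or shrinks
    # beyond the two limits.
    t = (y - y_bottom) / (y_top - y_bottom)
    t = max(0.0, min(1.0, t))
    # Linear interpolation between the near and far scales.
    return near_scale + t * (far_scale - near_scale)


# Moving "up" the frame makes the character smaller, which reads as distance.
for y in (0, 25, 50, 75, 100):
    print(y, round(sprite_scale(y), 2))
```

The character never leaves the flat plane here; only its vertical position and size change.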

I tried this. I took a picture of the downstairs of my apartment, took four pictures of myself (from front, back, right, and left), and applied both the picture of my apartment as well as the pictures of me to two different planes. The plane with the apartment picture would never move while the plane with me applied to it would move according to what buttons I pressed. At the same time, the plane would change images depending on what direction I was moving (moving up would display my back, moving down would display my front, etc.). Additionally, I scaled the “me” plane as it moved up and down in space –getting bigger the further down it went and getting smaller the further up it went; this created the illusion of moving back and forth in space. However, I quickly realized there was a problem. I had placed the “me” plane in front of the apartment plane (think layers in Photoshop), otherwise the “me” plane would be behind the apartment plane and would therefore be unable to be seen. Because of this, the “me” plane was in front of everything in the apartment plane. The problem was that, even though making the “me” plane bigger and smaller created an illusionary depth, the “me” plane was in front of things like furniture when it should be that the furniture (in this case a table) was in front of me, and therefore blocking the lower half of my body. This was a big problem.
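Choosing which of the four photographs to show can be sketched as a lookup on the direction of movement. This is a minimal Python sketch with hypothetical names; it assumes +y means moving up in the frame (away from the camera), that the “right” and “left” photos are the ones shown when moving right and left, and that ties between axes favour the vertical image, none of which is specified in the write-up.

```python
def sprite_for_movement(dx, dy, current="front"):
    """Pick which of the four photos to display for a movement (dx, dy).

    dx > 0 is rightward in the frame, dy > 0 is upward (away from the camera).
    When there is no movement, the currently displayed photo is kept.
    """
    if dx == 0 and dy == 0:
        return current
    # Use the dominant axis; ties go to the vertical axis (an assumption).
    if abs(dy) >= abs(dx):
        # Moving away from the camera shows the back, toward it the front.
        return "back" if dy > 0 else "front"
    return "right" if dx > 0 else "left"
```

Calling this every frame with the latest input and the previously displayed photo keeps the character facing its last direction of travel when it stops.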

After thinking on it for a while, I came up with a solution, albeit an overly complicated one (the kind of solution I seem to excel at). I could take this 2D image of the apartment and create a 3D environment from it. With that 3D environment, I could actually move the character back and forth in space while still using a 2D image. It’ll make more sense once you look at the pictures below. So now that I had a 3D environment, I could actually move the character back and forth in space, and I didn’t have to worry about making the character bigger or smaller. At the same time, this solved my dilemma with the “me” plane being in front of things that it shouldn’t have been. Now because I was moving back and forth in space, I could actually be “behind” the table.

From this, I produced my project proposal. I was planning on making the 3D model invisible (so that only the background image would be seen) and only using it as a way to detect collision (so the character wouldn’t move through objects). This, however, presented a problem as well. I had a 3D environment, but the image was still 2D. So while I could move “behind” the 3D table, it still appeared as though I was standing in front of the 2D image of the table. This was a problem, but since the basic structure of what I was planning was there, I decided to solve it later, possibly after getting some ideas from the proposal critique. In the proposal, I made it so that a button could be pressed to toggle the visibility of the 3D environment on and off. This way, I could show how I planned to solve the problem as well as how I intended it to look.

During the critique I got some thoughtful feedback. It was suggested that I should focus on the disconnect between the physical player and the virtual character –what is important about controlling a virtual character by moving your body just as you would to control your physical self? Why did I want to present this in the DMA crit room (which I did)? Why was the DMA room important to the piece? What significance would other locations have? Would the work be the same, different, better, or worse than in the DMA room? Think about incorporating some sort of two-player communication. Could the player perhaps be affecting something in real life? Could the non-players (other viewers) be affecting the player in some way? Virtually? Physically? Perhaps use some kind of physical restriction to highlight the virtual movement via the physical movement. Did the Balance Board fit this in that players would lean rather than walk in place in order to move the virtual character?

One suggestion in particular piqued my interest. I had already thought about moving the player physically in accordance with how the virtual character was moving; however, I had decided it couldn’t be done with my budget and timeframe. Had I had unlimited funds and time, I would have ideally investigated finding or building some sort of device that allowed such a feat. The player would be wearing LCD glasses so that the real environment would be blocked and the person could only see what was visible in the glasses. The person would start in the DMA room (for example) both in real life and in the game. The player would move the virtual character by leaning in different directions on the Balance Board. In addition to moving the virtual character around the virtual environment, it would also cause the movable device to move around the physical environment. So, for example, the player would start in the DMA room, but then would navigate the character to the printing studio on the other side of the third floor hallway. When the player took off the glasses, he or she would find him/herself some 100 feet away from where he/she started. I believed this would create an interesting sense of awareness, or lack thereof. While the virtual character and environment may resemble the real world, they still don’t look perfect, on top of the fact that users are aware that what they’re seeing is not real; because of this, it is reasonable to suspect that no one would expect to be moved from where they’re playing the game (as playing video games or watching something on a screen is a mostly stationary activity). So then what would it be like to find yourself in a different location when you took off your glasses? During your time playing the game, you had no knowledge of moving around in the real world, nor would you expect it at all. How would you react when you found that you had unknowingly changed locations? Additionally, how would this speak to the idea of the disconnect or the relationship between virtual and physical? But again, this was all in an ideal situation…

For weeks after the critique, I had a frustratingly hard time deciding where I wanted to go with the piece conceptually. Eventually, I decided to put that on hold and work on getting the Balance Board to interface with both my computer and with Unity. I started by looking at UniWii, the Unity plugin that allowed interface and communication with the Wiimote. Unfortunately, that turned out to be a dead end. As of now, the only thing that UniWii does is work with the Wiimote and the Nunchuk accessory; no Balance Board support. I then proceeded to search the Unity forums to see if 1) anyone had added Balance Board support to UniWii and 2) anyone was even trying to use the Balance Board with Unity. After a few days of searching, I found that someone was using a program called OSCulator to connect their Balance Board to their Mac, so I checked out the program. I read a little of their forums and found that there were a few topics dedicated to the subject, so I went ahead and downloaded the app. I found some poorly-written step-by-step instructions on how to use the program to connect the Balance Board, but I found that I couldn’t get it to work… After some more searching, I found out that certain models of the Balance Board didn’t work with the program. I checked my model number, but couldn’t find any information on the forums about whether it worked or not. I then started a topic on the forums asking if my model Balance Board was supported. I explained that I was planning on using it in a project, and any information on the subject would be helpful. Not too long after I posted, the girl who (I believe) created the application responded informing me that, yes, there were some bugs, and she’d have them worked out within the next few days.

While waiting, I did some more searching on the subject and found that there was another program that people used to connect Balance Boards to Macs –Wiiji. Wiiji is a program created specifically to use the Wiimote and a few of its peripherals as joystick inputs for a Mac. I found a few YouTube videos explaining the process, and it seemed easy enough, so I downloaded Wiiji. After a few failed attempts at trying to connect the Balance Board, I found that there was a modded version of the program that allowed the Balance Board to work; the Board was not supported natively. The modded version was not easy to find, but I eventually found it and installed it, and sure enough, I got the Balance Board to connect! I then downloaded a free game for Mac that supports joystick input and tried out the Board. It worked great! The next step was to make it work with Unity.

I was pretty certain that Unity supported joystick inputs natively, so I researched that topic for a bit. I found that simply reading the default horizontal and vertical input axes should be what I was looking for. I pulled up one of my older Unity projects and whipped up a quick JavaScript script to allow an onscreen cube to move according to the joystick inputs. I connected the Balance Board, started the Unity project, and sure enough, it worked; the little cube was moving around just as I was leaning on the Board. This was a huge success for me! Despite it taking quite a while to figure out how to do it, actually making it work was TONS easier than I expected it to be; a simple Bluetooth connection rather than dozens of different classes and scripts that I would have to find, program, and modify. Again: HUGE success!
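The cube test boils down to adding the two axis values to the cube’s position every frame. The following is a minimal Python sketch of that update step, not the original Unity script: in Unity the two values would come from the default “Horizontal” and “Vertical” input axes, while here they are simply passed in as numbers between -1 and 1. The function name, the speed, and the frame time are hypothetical.

```python
def move_cube(position, horizontal, vertical, speed=2.0, dt=1 / 60):
    """Return the cube's new (x, y, z) position after one frame.

    horizontal and vertical are joystick-style axis values in -1..1, which is
    how the Balance Board appears once it is exposed as a joystick.
    speed (units per second) and dt (seconds per frame) are illustrative.
    """
    x, y, z = position
    # Leaning left/right moves the cube along x; leaning forward/back along z.
    # Height (y) is left untouched.
    return (x + horizontal * speed * dt, y, z + vertical * speed * dt)


# One second of leaning fully to the right at 60 frames per second.
pos = (0.0, 0.0, 0.0)
for _ in range(60):
    pos = move_cube(pos, horizontal=1.0, vertical=0.0)
print(pos)
```

Multiplying by the frame time keeps the movement speed independent of the frame rate.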

So now that I had the Balance Board working with Unity, I decided to make another build to see exactly how everything would work. One day after class, I went outside and took some pictures of my apartment complex. After taking a few dozen pictures, I picked a few and started making 3D models from the 2D images. These models were more complicated than the original one I had made for my proposal. For that, I had simply taken cubes and cylinders straight from Unity and placed them according to the camera’s perspective, so really, they weren’t in the right places spatially, they just looked like it. This time around, I used Maya to construct the environment. I placed the camera at a distance and angle which mimicked (as closely as I could) the distance and angle of where I had taken the picture in real life. I then proceeded to model the environment.

At first I tried creating flat polygons on top of the image and then extruding the faces back into space. This didn’t work; things weren’t in the right place nor were they proportioned correctly. I tried a few other ways of creating the environment, all ending in failure. Eventually, I found the solution. I created a ground polygon that lay flat on Maya’s grid. I then made polygons that were flat on this surface but fit the shapes and dimensions of the shapes in the image. Really what I was doing was recreating an accurate model of the environment, just viewing it from a particular angle. Of course, it wasn’t as ideal as this, so I had to stretch and squish a few objects so that they looked correct from the camera’s view.

After I finished two models (more or less the same area from two different angles), I put them into Unity and tried to piece them together. What I mean by that is that I had two different models that were of the same area, just from different angles, so I had to try to fit the two sets of shapes together so that it created one big composite model. I then took two cameras from Unity and placed them so that they mimicked the position and distance of the camera that took the pictures. Lastly, I inserted the 2D images in front of the cameras.

Now that I had the environment laid out the way I wanted it, it was time to put a character into the scene; a simple cube would do for now. I used the script I had made earlier to move the character. So now I had a cube moving around this environment according to my movement on the Balance Board. The next step was to change the camera angles depending on where the cube was located in the scene. Like I said, I had two cameras in the scene. One was behind the apartment’s little 2-story gazebo and the other was in front of it. I made it so that when the cube moved in front of the gazebo (you couldn’t see it anymore because the gazebo was blocking the view), the game would switch to the other camera so that the cube could be seen again. The opposite happened when the cube moved back behind the gazebo –it switched back to the original camera. Lastly, I added a feature that allowed the modeled environment to be visible/invisible and another to make the 2D images visible/invisible. And thus, my second demo was complete.
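The camera switch can be sketched as a check on which side of the gazebo the cube is on. This is a minimal Python sketch under stated assumptions: the gazebo sits at a single position along one axis (z), larger z values are “in front of” it, and the camera names are hypothetical labels. The write-up does not say how the switch was actually triggered; a trigger volume in the scene would work just as well.

```python
def active_camera(cube_z, gazebo_z=0.0):
    """Choose which fixed camera should be showing the cube.

    The camera behind the gazebo loses sight of the cube once the cube moves
    in front of the gazebo, so the view switches to the camera in front.
    gazebo_z is an illustrative position; +z is assumed to be the front side.
    """
    if cube_z > gazebo_z:
        return "front_camera"
    return "back_camera"


# Walking from behind the gazebo to in front of it and back again.
for z in (-3.0, -1.0, 1.0, 3.0, -0.5):
    print(z, active_camera(z))
```

A cube hovering right at the boundary would flip between cameras with this simple check, so a practical version might add a small margin before switching back.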

I showed this demo during our second crit and got some more feedback. There was a lot of talk about two things in particular. One was about disorientation. Could I, or should I, make it so that movement of the virtual character was disorienting to the player? What would that do? How would it feel? The other (semi-related to the previous suggestion) was about placing the Balance Board, and thus the player, in a position high off of the ground. By doing this, I could place the player in a “position of power,” and possibly at the same time create an image of the player as a piece of art (by perhaps having the player stand on a traditional white pedestal or something of the like). At the same time, I could use this to place the projection (I had dumped the LCD glasses idea due to time and money) in some interesting places. Instead of just projecting up onto a wall (which would be boring and expected), I could perhaps project onto the ground, making the player look over the edge of whatever he or she was standing on in order to see the projection. Again, what would this do to the project, what would it mean to play like this, etc.?

I was really fond of both suggestions, so I began thinking about how I would implement them into the project and what they would mean for it. This turned out to be much harder than I anticipated. In terms of just doing it, I ran into a few problems. If I were to raise the Balance Board off of the ground, I would have to make sure two things happened: one, that whatever someone was standing on could support that person’s body weight; and two, that it didn’t present a threat as far as falling off of the platform. Things only got worse when I realized that I really wanted people to be leaning over some sort of edge so that their balance was thrown off. At one point, I thought about presenting on top of one of the school’s parking garages, but I decided that would be far too dangerous. I also thought about something smaller; perhaps just using a white pedestal from the studio or building one of my own. That MIGHT work, but I felt I wanted the platform to be higher than just a foot or so off of the ground.

Also, I couldn’t figure out how or where I would place the projection… I wanted it to be below the player; I knew that much, but how would I get it there? If I projected from above the player, the person’s shadow would most likely get in the way. If I were somehow able to project from below, I’d have to build a tall platform that would allow the projector to be far enough below to display a sufficiently large image. Also, this structure would have to be strong enough to support both itself and a player. ALSO, the floor material would have to be at least semi-transparent so that the projection could be seen. In an ideal situation, I could build a floor composed of either multiple flat-screen TVs with strong plastic over them or a floor of just strong plastic that the projection would shine onto. Of course, this could not happen… I thought about using mirrors to bounce the image from one location to the ground. This, however, could very easily get expensive. Not only would I have to get the reflective glass, but I’d also have to build some sort of structures to hold the mirrors in the needed space and at the needed angles. Even then, I’d most likely have to get pretty large pieces of glass depending on how many times the image was reflected. And then there could still very well be the problem of shadows getting in the way. All in all, not the best solution.

I then tried to think of a location that would lend itself to this sort of thing. Where could I present that would be 1) high off of the ground, 2) safe to stand, and 3) have room and need to lean forward to see the projection? I experimented with a few places in FAC. The first I thought of was the second-floor bridge that connects FAC to the library. I went up to the bridge and looked down at the courtyard. It was pretty much impossible to lean forward due to the windows, so in order to see the projection, I would have to project it OUT onto the courtyard ground rather than straight down below the bridge like I wanted. I moved over a little and examined the area of the bridge overhanging the ditch in front of FAC. This gave me more distance and, thusly, let me project closer to the bridge, but it was still too far out, nor did this really fix the leaning issue.

I then tried the stairwell. I went to the third floor and looked down the side of the stairs (the wall opposite the stairwell windows). I could place the projector down at the ground floor and project up the wall. This gave me two things. One, it allowed a safe area for people to lean over (the railing helped a lot), and two, it allowed for some nice distortion of the image which could play into the disorientation aspect of the piece. However, I thought this might be a bit TOO much in terms of disorientation; so much, in fact, that you probably would have no chance of knowing where you were or where you were moving.

I then tried something a little different. I really liked the idea of the player being on a white pedestal, so I went and got one from the studio. I tried using it with the previous two locations. On the bridge, it gave me more height, which allowed me to project closer to the building, and it also gave me a little more room to lean forward, but it still wasn’t as much as I wanted. I tried the stairs again. This was much better than the bridge in terms of height and leaning, but the distortion issue still seemed to be too big of a problem.

After some more thought about the location, I came back and tried the DMA student work room. It was three stories up, and standing on the desks gave me a raised platform to stand on. The height was sufficient to look almost straight down, and leaning didn’t seem to be a problem as the windows gave enough room to do so. This seemed like the place to do it. It had been in the back of my mind most of this time, but I finally decided to use the Fine Arts complex model I had from our previous work on the ARTBASH game as the virtual location.

Unity didn’t like this. After much experimentation and trying different methods of running the game, I was finally forced to just use FAC rather than all four Fine Arts buildings. For whatever reason, I just couldn’t get Unity to run with all of the buildings in there… I had decided to project onto the woodshop roof as this was both directly in front of the DMA room window and it gave me a nice, big, flat surface to project onto. I also figured this could work with my concept. I had a fleeting idea of creating an environment that was comprised of lots of little buildings and the player moving a character around the roof (either in third or first-person) and crushing these little buildings, kind of like Godzilla or something. It was just a silly idea that seemed appropriate. The player would be really high up, looking down at smaller buildings. On top of this, the player would be controlling the movement of the character with his or her feet: perfect for stomping innocent bystanders and troublesome skyscrapers.

Funnily enough, this pretty strongly influenced my final idea. I decided to create the FAC model in a way that allowed it to be destructible. I wanted the player to be able to move around the building and knock over chairs, tables, doors, windows, walls, and eventually the whole building. I felt this could say something about a few things. Perhaps the fragility of art (the physical fragility of, say, something like the Mona Lisa), the unstableness of the art institute (both here at UF and in general), the delusions of grandeur of some artists, the seemingly higher importance of certain media over others, etc. In addition, I wanted the player to get bigger the more he or she destroyed. This would eventually cause the player to be so big that, even if he or she didn’t want to destroy anymore, simply turning or looking up would cause the entire building to collapse. I also thought that I might make multiple FACs. I thought about making an entire planet full of FAC buildings or perhaps making a kind of recursive structure so that the player would get bigger, and when the current FAC became too small to interact with, the player would notice that he or she was in fact a small person inside of another (bigger) FAC, and it would go on like that. However, this couldn’t be done as I couldn’t even fit the four buildings into Unity, let alone an infinite number of FAC buildings. So I nixed the multiple buildings idea as well as the getting bigger idea.

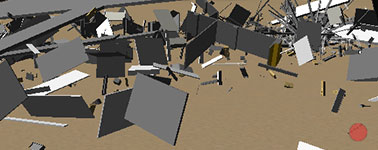

What I ended up doing was creating a large canyon far below FAC, so the building was essentially floating in midair. By doing this I had created a large area and a long drop for the broken pieces of FAC to fall. I also liked the idea of the player accidentally falling off of the building’s structure and falling into the pit. I made it so that, once the player fell past a certain point, the entire building would collapse. If the player were to be looking at the building when this happened, it would be a pretty neat sight as all of the components of the building (the walls, the windows, the furniture, the floor, etc.) exploded and came tumbling down with the player.

I felt that this particular part of the concept needed work, but I had a rough idea of what I wanted this to be. It seemed to me that the point of the game was to destroy the entirety of the building while still staying afloat. This proved to be not only difficult, as the more the player moved, the less there would be to stand on, but also it eventually became near impossible. Sooner or later the player would find that there was no more ground to walk on or perhaps the floor would just fall out beneath him or her. I felt this could have something to do with the pompous artist and his/her realization that he/she is not as grand as previously thought. A somber thought, but I felt it could be appropriate in certain instances, though probably not as often as I’m making it sound. Again, the concept needed some work, but it seemed like an okay starting place.

So, in the end, I presented in the DMA student work room. The Balance Board was placed on the desk counter looking out the window. The projection was displayed on top of the wood shop building. The player stood on the Balance Board and looked out the window, down at the roof of the building. The game started in the exact same spot in the virtual FAC, and looked out of the same window down at the wood shop roof. The player could then freely move around the DMA room by leaning in different directions on the Balance Board; also, when the character moved, the camera (FPS camera) would tilt in the direction the player was moving. Because of this, the player had to regulate between moving and seeing where he or she was going, or even perhaps just charge forward without knowing what was ahead. Small objects (chairs, desks, the couch) had to be knocked around 100 times. After that, bigger objects would start being moved by the character (table tops, bookshelves, doors, walls, etc.). At this point, the player could navigate around the entire building (before this, the door to the DMA room had been shut). The player could continue going through the building, destroying everything he or she touched, until finally the player fell through the building and began to fall to the canyon below. Again, once the character fell a certain distance, the entire FAC building would collapse and the pieces would tumble down with the player. Most likely, the player would reach the ground below first, at which point the pieces of the building would rain down around the character. Once the character reached the ground, a restart button would appear in the bottom left of the screen; the player could choose to restart the game at this point via the button or continue exploring the rubble and the canyon.
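
The progression described above (100 small hits unlock the bigger objects, and falling far enough brings the whole building down) can be sketched as a small piece of state. This is only an illustration in Python, not the game's actual Unity code. The 100-hit count comes from the description; the `COLLAPSE_DEPTH` value and all of the names are assumptions.

```python
from dataclasses import dataclass

SMALL_HITS_REQUIRED = 100  # from the description above
COLLAPSE_DEPTH = -50.0     # hypothetical height below which the building collapses


@dataclass
class GameState:
    small_hits: int = 0
    building_collapsed: bool = False

    @property
    def can_move_large_objects(self) -> bool:
        # Table tops, bookshelves, doors and walls only react after
        # enough small objects have been knocked around.
        return self.small_hits >= SMALL_HITS_REQUIRED

    def hit_small_object(self) -> None:
        self.small_hits += 1

    def update_fall(self, player_y: float) -> None:
        # The collapse is one-way: once triggered it never resets,
        # even if the player's height changes afterwards.
        if player_y < COLLAPSE_DEPTH:
            self.building_collapsed = True
```

Keeping the collapse as a flag that is only ever set, never cleared, matches the idea that the building stays destroyed while the player explores the rubble.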

Added Tuesday, February 21st, 2012 @ 9:54 PM

If I remember correctly, the assignment for this project was to make a piece of “wearable technology.”

Though not by name, AU is a spiritual piece of the WiiMersioN Project. My goal was to create a device that would allow a user to view a 3D environment using his/her head movement to change the direction of the virtual camera. Essentially, I wanted to make a virtual reality helmet, and that’s pretty much exactly what I did :D. Using two Wii remotes, a bike helmet, Unity, a pair of VR glasses (glasses with little screens in them) that I borrowed from a friend, lots of math, and good ole fashioned duct tape, I created a device that allows users to immerse themselves in a virtual world.

Aside from the plugin that allows Unity to communicate with the Wiimotes, I created everything. I think… I can’t remember if I modeled the 3D room or not. I certainly have modeled that room for other projects, but for this one I may have used a preexisting model that another student made…

Added Tuesday, February 21st, 2012 @ 9:26 PM

The WiiMersioN project is the first in a set of three projects (all carrying the “WiiMersioN” name) I created using Nintendo Wii controllers as a type of “augmented” reality through real life motions corresponding to the same motions onscreen. With these projects I was basically challenging myself to see how closely I could mimic real life through video games. To think of it in extreme terms, I was trying to accomplish full virtual/augmented reality by using the tools at my disposal.

The WiiMersioN Project begins by displaying the 3D-rendered logo along with onscreen instructions prompting players to connect a Wii remote to the computer via Bluetooth. The game alerts the player when the Wii remote successfully connects, then prompts him or her to connect a second Wii remote. After both Wii remotes are synced, the player can press the + button to begin the game.
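
The start-up flow is a simple sequence of prompts. A minimal sketch of it in Python follows; the function name and the prompt strings are made up for illustration and are not taken from the game's code.

```python
def main_screen_prompt(connected_remotes: int, plus_pressed: bool) -> str:
    """Return what the main screen should do for the current state."""
    if connected_remotes < 1:
        return "connect first Wii remote"
    if connected_remotes < 2:
        return "connect second Wii remote"
    # Both remotes are synced; wait for the + button before starting.
    if not plus_pressed:
        return "press + to begin"
    return "start game"
```

Because the checks run in order, pressing + early does nothing until both remotes are connected.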

The game is an interactive crystal image. The player holds one Wii remote in each hand in real life in order to control the virtual hands onscreen. There are also two virtual Wii remotes on a table that the player can pick up (while using the real Wii remotes to instruct the virtual arms to do so). Lastly, there is a TV screen past the table. The TV displays exactly what the player is looking at: the game! Of course, inside that game is another instance of the game, and so on and so forth.

The only objects I didn’t model are the two Wii remote models. As far as coding, I found a plugin for Unity that allowed connection with Wii remotes, but I modified it, added to it, and used it to fit my needs.

ORIGINAL DOCUMENTATION

Original Idea

(In an e-mail to professor)

Hi, Jack, this is Adam Grayson. I’d just like to run this project idea by you before class Tuesday to see what you think.

So what I’ve been thinking is that I can make a game that implements two Wii remotes. The player would hold one in each hand. These Wiimotes would correspond to two arms/hands that are on-screen. The movement of the virtual hands would respond to the movements of the player’s hands via the two Wiimotes. So, for example, if the player moves his/her left hand forward, the left virtual hand would move forward. In order to grab objects in-game, I was thinking that the player could press/hold the B button on the Wiimote. This button is on the bottom of the controller as a sort of trigger. I think this is a good simulation of one grasping something. So when the B button is pressed, the virtual hand makes a grasping gesture; if some object is in front of the hand when the button is pressed, the object will be picked up. In order to release the object, the player would release the B button.

The movements of the virtual hands would respond to the three axes along which the player can move the Wiimotes as well as rotational movement.

I’m not sure if I will be able to implement this into the game, but later this year, Nintendo is coming out with the Wii Motion Plus. This is a device that plugs into the bottom of the Wiimote and more accurately interprets movement made by the player. As it stands now, the Wiimote does not offer true 1:1 movement; however, with the Wii Motion Plus, 1:1 will be possible. Obviously this could help me in interpreting movements by the player, but I’m not sure if I’ll be able to implement this as there is no release date for the device other than sometime this year.

What I’m not sure about as of now is how this technology would be used in the game. Perhaps it could just be a sandbox game where you are put in a certain open environment and there are objects around that you can interact with. Maybe I could simulate drawing with this, so that you could pick up a piece of paper and a pencil and “draw” (you would see the virtual hand drawing rather than just lines appearing on the paper). I could base this around some sort of puzzle that must be solved.

I think what I really like about this idea is the immersion aspect of it. With the use of the controls and the fact that the player can see “their own” hands in the game, I think this brings the player into the game more than usual. I don’t know if this will come up, but I’d also like to implement a system that allows the player to see themselves if they look into a reflective surface. Usually in games, if the player looks into a reflective surface, such as a mirror, the modeled character is seen in the reflection. Instead of doing this, I’d like to use some sort of camera that looks at the player and feeds the video into the game in real-time. So, say someone were to play this on my laptop (it has a built in iSight). The iSight would be recording video that would be fed into the game. If the player looked into a mirror, they would not see a rendered model, but rather see themselves in real life via the iSight. Perhaps I could also implement some sort of chroma keyer in order to get rid of the background environment (real life) that the player is in and put in the background of the virtual environment.

Concept

The concept behind this project is to recreate human movement in a game scenario, focusing on the movement of the arms, hands, and fingers of an individual. In order to do this, I am planning on using two Wii remotes as game controllers. One remote will be held in each hand. The remotes will correspond to the movements of the in-game arms and hands through the use of rotation, motion-sensing, and IR sensing.

This will be a 3D-modeled game in a semi-confined environment (in a room; size has yet to be decided). As of now, the style of the visuals is planned to be realistic, however it is not set in stone and may change throughout the project development.

In addition to moving the character’s upper limbs, the player will be able to move the character around this virtual environment.

Controls

As of now, there are two planned stages of movement: Free Movement and Held Movement. Free Movement would be the normal movement of the in-game limbs as the player moves the character around the space. In this mode, the player will be able to move the arms of the character (again through rotation, motion-sensing, and IR sensing). Held Movement will be the item-specific movement of the limbs after the player has picked up an in-game object. For example, if the player were to pick up a virtual NES controller, the movements and button presses of the remotes will more specifically correspond to the fingers rather than the previous control over the entirety of the arms.

Transition from Free Movement to Held Movement

The player begins in Free Movement. In order to enter into Held Movement, the player will press the A and B buttons on the Wii remote. Each hand is controlled independently, so one hand can be in Free Movement while the other is in Held Movement.

Held Movement Specific Controls

Held Movement, while being primarily the same, will differ slightly depending on what object the player is holding in-game. Following the example given above, if the player picks up a virtual NES controller, Held Movement will be entered. An NES controller is primarily controlled with the thumb of each hand. Because of this, either the rotation or IR of the Wii remotes will be used to move the corresponding thumb (it will be decided later which control scheme works better). Moving the Wii remotes in this fashion will move the thumbs in a two-dimensional plane hovering over the NES buttons. In order to make the thumb press down, the player will press the A button on the Wii remote. Depending on whether the thumb is currently over a button, the thumb could either press down the button or press down on the controller, missing the button. In order to release the NES controller (exiting Held Movement) and return to Free Movement, the player will press the A and B buttons on the Wii remote simultaneously. Again, this can be done separately with each hand if so desired.
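
As a rough illustration of the two stages, each hand can be treated as a tiny state machine that flips between Free and Held Movement when A and B are pressed together. This is a sketch in Python under a few assumptions: the `combo_just_pressed` flag (true only on the frame the A+B combination is first pressed) and every name here are hypothetical, and the plan's check for whether an object is actually within reach is left out.

```python
from enum import Enum


class Mode(Enum):
    FREE = "free"  # the remote drives the whole arm
    HELD = "held"  # the remote drives the fingers for the held object


def next_mode(mode: Mode, combo_just_pressed: bool) -> Mode:
    """Toggle between Free and Held Movement when A+B is newly pressed."""
    if not combo_just_pressed:
        return mode
    return Mode.HELD if mode is Mode.FREE else Mode.FREE


# Each hand keeps its own mode, so one can be Free while the other is Held.
hands = {"left": Mode.FREE, "right": Mode.FREE}
hands["left"] = next_mode(hands["left"], combo_just_pressed=True)
```

Reacting only to a new press, rather than to the buttons being held down, keeps a hand from flickering between modes on every frame.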

Character Movement

This area is still under development. I realized that the player is already using both hands to control the virtual arms. In addition to this, every function of the Wii remote is being used for this as well, so there are no functions available to control character movement around the virtual space. I have thought about implementing the Wii Balance Board to solve this problem. The Balance Board is able to sense the weight distribution of a person standing on it. So, for example, if the person is leaning to the left, the Balance Board detects this. Through this detection of body movement, the in-game character could be controlled. However, as is tradition now, there are two ways to move a character: directional movement and rotational movement (these are controlled with the dual-joystick setup, or, in the case of computer games, the mouse and the directional/WASD keys). The problem is that the Balance Board could only be used for one of these controls, not both. So this method could only control the way the character turned OR the way the character walked.
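
To make the weight-distribution idea concrete: the Balance Board has four pressure sensors, one in each corner, and a lean can be estimated by comparing their readings. The sketch below is in Python and is only one plausible way to do it; the function names, the normalized -1 to +1 output, and the dead zone value are assumptions, not something this plan specifies.

```python
from typing import Tuple


def center_of_pressure(top_left: float, top_right: float,
                       bottom_left: float, bottom_right: float) -> Tuple[float, float]:
    """Return the lean as (x, y), each from -1 to +1, from four corner loads."""
    total = top_left + top_right + bottom_left + bottom_right
    if total <= 0:
        # Nobody is standing on the board.
        return (0.0, 0.0)
    # Positive x means leaning right, positive y means leaning forward.
    x = ((top_right + bottom_right) - (top_left + bottom_left)) / total
    y = ((top_left + top_right) - (bottom_left + bottom_right)) / total
    return (x, y)


def apply_dead_zone(value: float, dead_zone: float = 0.1) -> float:
    """Ignore small shifts so the character stays put when standing still."""
    return 0.0 if abs(value) < dead_zone else value
```

The resulting pair could drive either walking or turning, which is exactly the one-or-the-other limitation described above.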

There is a head-tracking technology available for the Wii and its remotes. This technique uses IR sensing. With this, it is feasible to control the rotational movement of the character in addition to the Balance Board, which would control the directional movement. This would be an ideal situation, but I am not sure if it is realistic for this particular project.

Again, these are ideas, and this section is still under development.

Additional Ideas

An idea that may or may not be implemented into the game is the use of a webcam which feeds video directly into the game. If the player moves the character in such a way that a reflective object is seen on-screen, the object will not reflect the in-game character’s 3D model, but will instead “reflect” the video being received from the computer’s webcam. This webcam will be facing the player while he/she is playing. Thusly, the video will be of the player in real life; this will be what is reflected in the reflective object. This is to suggest that the player him/herself is the character in this virtual environment rather than a fictitious 3D character.

Progress Report and Extraneous Notes

3/17/09

- began working on 3d hands in maya

3/26/09

- rotation works (simple C# console output)

- X and Y AccelRAW data states make sense

- seem to vary from 100-160

- rest state is 130

- not sure what Z does yet… (don’t think i’ll need it)

- rotation will be used for rotation of the wrist and arm
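
The raw readings noted above (resting at 130 and varying from 100 to 160) can be turned into a tilt value with a little arithmetic. This Python sketch is only an illustration: it assumes the extremes of the range correspond to the remote being tilted a full 90 degrees either way, which is a guess and not something these notes confirm. The function names are made up.

```python
import math

REST = 130       # raw reading at rest (from the notes)
HALF_RANGE = 30  # readings vary from 100 to 160, i.e. rest +/- 30


def raw_to_unit(raw: int) -> float:
    """Map a raw accelerometer reading to the range -1 to +1."""
    value = (raw - REST) / HALF_RANGE
    # Clamp so sudden jolts outside the usual range do not break asin below.
    return max(-1.0, min(1.0, value))


def raw_to_tilt_degrees(raw: int) -> float:
    """Estimate the tilt angle, assuming the extremes mean +/- 90 degrees."""
    return math.degrees(math.asin(raw_to_unit(raw)))
```

The same mapping would apply to both the X and Y readings, giving one angle for roll and one for pitch.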

3/31/09

- built simple arm and hand for use in testing uniWii and the results i found last week

- applied wiimote script packaged with uniWii to arm

- able to control arm through tilt/accelerometer in wiimote

- pitch and roll/X-axis and Z-axis rotation

- made a script that would allow suspension of arm movement in return for hand-only movement when the A button was pressed

- hand moved around same pivot point as the arm, so it looked odd

4/2/09

- looked into using two wiimotes

- couldn’t really find anything

- tried to connect two just to see what would happen

- both connected perfectly, no extra work involved

- applied working method to two arms/hands

- operated with two wiimotes

4/7/09

- began to look into operation via IR position rather than accelerometers

- began reading through the uniWii/wiimote code to understand what was going on

- realized where the rotations were being made in the code, but wasn’t certain as to the specifics

- did some research on the methods/variables used around the piece of code

- got the gist of how the code worked

- tried to implement IR into the rotation code

- no luck

- code was getting a little complicated so i started a new scene with just a cube and worked on trying to move the cube with IR

4/14/09

- according to the code output, i got the IR to work, but i was seeing no visible sign of it working

- after much research and trial and error, i realized that the measurements being returned were between -1 and +1

- thusly, i WAS rotating, just by very small amounts

- created a separate pivot point for the hand

- hand now rotated in a more realistic fashion

4/16/09

- worked on getting the arms to not rotate so many times when the cursor went on/off screen

- realized that, while i was multiplying the IR by 100 (because the RAW measurements were between -1 and +1), the RAW measurement for being off screen was -100

- so rotation while the cursor was on screen was working fine, but as soon as the cursor went off screen, i was rotating the arms 10,000 degrees

- worked on making use of a variable to stop this over-rotation

- worked on making use of a variable that would return the rotation to 0 if the cursor went off screen
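
The over-rotation described above comes from scaling the off-screen value the same way as a normal reading. A minimal Python sketch of the guard follows. The scale of 100 and the -1 to +1 range come from these notes; treating anything outside that range as "off screen" is an assumption, as are the names.

```python
SCALE = 100.0  # the IR readings were multiplied by 100 (from the notes)


def ir_to_rotation(raw: float) -> float:
    """Convert a raw IR reading to a rotation in degrees."""
    # Valid readings lie between -1 and +1. Anything else (such as the
    # -100 reported when the cursor is off screen) resets rotation to 0.
    if not -1.0 <= raw <= 1.0:
        return 0.0
    return raw * SCALE
```

Without the range check, the off-screen value of -100 would be scaled to 10,000 degrees of rotation, which is the spinning seen in testing.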

4/21/09

- managed to get unity pro trial via email with unity staff

- got it working on my laptop

- began working on project on laptop

- had a lot of trouble getting wiimotes to connect to laptop

- when they did connect, most of the time, the IR functionality did not work

- went through a lot of trouble shooting to try to get this working

- nothing ended up working

- realized i would not be able to use my laptop for unity work

4/23/09

- worked on different models in maya for possible use with hands in unity (piano keyboard, arcade button and joystick, tv remote, tv/tv cabinet, etc.)

- continued working on 3d hand model

4/24/09

- only able to pick up left wiimote with either hand

- began working on picking up either wiimote with left hand

4/25/09

- lab was closed

- worked on models some in maya

- figured i could do recursion with duplicates of what i already had

- make a hole in the tv where the screen would be, and just place the duplicates behind it

- warp these duplicates according to perspective to make them look correct

- ended up being REALLY huge and REALLY far out despite them looking small

- planned on testing this next time i’m in the lab

4/26/09

- able to pick up either wiimote with either hand

- however, very often both wiimotes would occupy one hand

- as soon as a wiimote touched a rigid body (and B was held on a wiimote), it would automatically go to the hand holding/pressing B

- worked on solving this problem all day

- resulted in nothing but me being confused
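
The behaviour being aimed for is that each hand holds at most one wiimote and each wiimote sits in at most one hand. Here is a minimal Python sketch of that rule. It is not the Unity script from the project, and the dictionary layout and function names are assumptions for illustration.

```python
from typing import Dict, Optional


def try_grab(holding: Dict[str, Optional[str]], hand: str,
             obj: str, b_held: bool) -> bool:
    """Give obj to hand only if B is held, the hand is empty and obj is free."""
    if not b_held:
        return False
    if holding[hand] is not None:
        return False  # this hand already holds something
    if obj in holding.values():
        return False  # the other hand already holds this object
    holding[hand] = obj
    return True


def release(holding: Dict[str, Optional[str]], hand: str) -> None:
    """Drop whatever the hand is holding (B was let go)."""
    holding[hand] = None
```

Checking both conditions before assigning is what stops two wiimotes from piling into the same hand.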

4/27/09

- continued working on only being able to hold one wiimote in one hand

- tried many different scripts, mixing variables, commenting out code, etc.

- tried using global variables from a static class

- kind of worked, but when wiimotes were held in both hands, one would not stay kinematic; gravity would instantly affect it, which resulted in not being able to hold it

- some more tinkering

- eventually got it to work by using very specific else if statements

- continued working on recursion

- planned to duplicate what’s seen in front of the camera about five times (initial estimate) and move it off screen

- (different from what i had already done)

- each of the duplicates would have a camera that would output to a texture which the previous duplicate’s tv would display

- tv1 would display output from camera2, tv2 would display output from camera3, etc

- continue until recursions could no longer be seen/distinguished

- after much time put into this process, i realized that, because i was duplicating these objects, i couldn’t have the cameras outputting to different textures, nor could the tvs display different things

- each camera would output to the same texture, and each tv would display the same thing

- effectively only using two of the duplicates going back and forth rather than using all of them as i had expected

- tried to make “different” scenes in maya

- same scenes, just differently named files so i wouldn’t be duplicating

- this worked fine, but the game took a massive dive in frame rate

- about 2-3 fps

- because of this, decided against using multiple “scenes,” cameras, textures, etc.

- went back to using two cameras with one “scene”
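
For the planned chain of duplicates (tv1 showing camera2, tv2 showing camera3, and so on), a rough way to guess how many levels are worth building is to see how quickly the nested picture shrinks. The Python sketch below is only a back-of-the-envelope illustration: the idea that the TV fills a fixed fraction of the view, the pixel threshold, and all of the names are assumptions. It also describes the multi-camera plan, not the two-camera setup that was finally used.

```python
from typing import Dict


def tv_sources(levels: int) -> Dict[str, str]:
    """Map each TV to the camera of the next duplicate in the chain."""
    return {f"tv{i}": f"camera{i + 1}" for i in range(1, levels + 1)}


def visible_recursion_levels(screen_width_px: int, tv_fraction: float,
                             min_px: int = 4) -> int:
    """Count nested images that are still at least min_px wide."""
    if not 0.0 < tv_fraction < 1.0:
        raise ValueError("tv_fraction must be between 0 and 1")
    levels = 0
    width = float(screen_width_px)
    # Each level shows the whole view again, squeezed into the TV screen.
    while width * tv_fraction >= min_px:
        width *= tv_fraction
        levels += 1
    return levels
```

For example, on a 1024-pixel-wide view where the TV takes up a quarter of the width, the nested image drops below 4 pixels after four levels, which is in the same ballpark as the initial estimate of about five duplicates.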

4/28/09

- began working on main screen

- wanted to use the logo i made along with the hand that i had modeled

- rigged the hand in maya and positioned it to match the drawing

- used the wiimote .obj file i had and positioned it to match the drawing

- created rings and balls and positioned them to match the drawing

- created motion paths for balls to move around the rings

- played around with different materials for the objects in the scene

- decided on black and white lamberts with toon outlines on the balls

- when transferred from my laptop to the classroom computer, the rigged hand mesh resulted in some very messed up geometry

- went back to my laptop and deleted history on hand mesh

- this resulted in a loss of animation of the hand (because i had animated the skeleton)

- fortunately, the mesh kept the pose in which it was rigged, so i simply animated it as the skeleton was animated

- unity did not like the motion paths or the outlines on the balls

- went back into maya to remove the toon effects and hand key every frame of the balls’ paths

- after this, unity did not have a problem with this scene

- import and animation in unity worked fine

- looped animation

- during the main screen, i wanted the wiimotes to be used as mice inputs in order to throw around some ragdolls or rigid bodies before the actual game

- kind of like a preloader

- looked at dragRigidBody script to see if i could simply replace the mouseDown event with a button press event on the wiimotes

- after some research and a few experiments, i realized that it was too much work, and i would have to basically redo everything i had done for the main game in order for this to work

- decided against ragdoll/rigidbody preloading actions

- continued to finish steps for main screen

- main screen’s purpose was to sync wiimotes before game started as well as have cool opening design

- went into photoshop to create text prompts for users to connect and sync wiimotes to game

- placed these prompts onto planes in maya

- originally tried to animate transparency of planes in maya so that they would have a slight flashing animation

- unity did not translate the transparency animation

- then tried to place text around cylinders so that the text would orbit around the hand like the rings

- animated the half-cylinders to rotate

- unity did not like this animation either

- instead of animating where the objects were placed in unity, the objects were moved to different positions while animating (only while animating)

- this position could not be moved

- decided to just use static planes for text prompts

- began to write script for execution of prompts

- as user synced wiimotes/pressed buttons, prompts would (dis)appear from the camera view

- last prompt/button press loads the game scene

- worked on some final tweaks (removed displayed info from game scene, fixed main screen cursors to say “left” and “right,” took a last look over code)

- added two audio files to project

- one for main screen, and one for game scene

- added script in game scene that returns to main screen if either wiimote disconnects

- built game for final testing

- game somehow (and thankfully) managed to work on my laptop despite it refusing to do so as of late

- built game as OSX universal, windows .exe, and web player

- used game logo as application icon for the three versions

- DONE!

Links To Game

Mac OSX .app, Windows .exe, Web player

- Note – The web player may not work. I tried it earlier, and I think the problem is the bluetooth. If I can get it to work, I’ll definitely update it.

- Note – The Windows version may have missing prompts in the Main Menu (sometimes they appear, sometimes they don’t; seems dependent on the computer…)

- If you have any trouble with the game, please refer to the instructions